The partnership between Intel and Google Cloud has entered a new phase, focusing on building better AI infrastructure. As artificial intelligence continues to grow, companies need systems that are not only powerful but also efficient and scalable. This collaboration aims to deliver exactly that.

A Long-Term Partnership Driving Innovation

Intel and Google have worked together for many years, and this relationship has helped shape modern cloud computing. With the latest announcement, both companies are strengthening their efforts to support AI workloads at scale.

Google Cloud continues to rely on Intel Xeon processors to power its infrastructure. These processors run across multiple instance families, delivering reliable performance for different types of workloads. This continuity positions Google Cloud to scale its infrastructure as AI demand grows.

Why Intel Xeon Matters for AI Growth

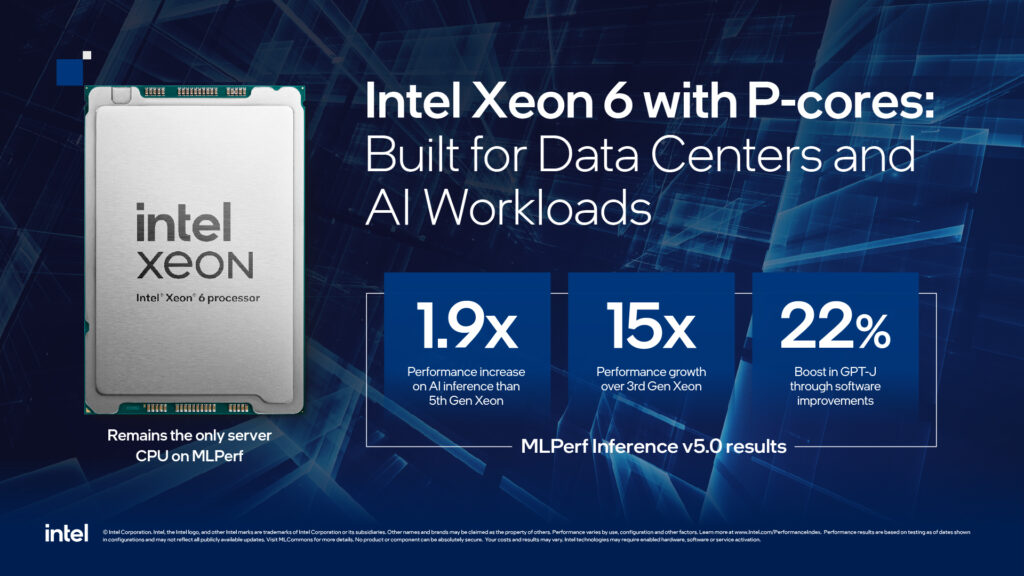

AI systems are becoming more complex every day. They require strong coordination between different hardware components. Intel Xeon processors play a key role in this process by handling core tasks such as data processing and system control.

Some key benefits of Intel Xeon processors include:

- High performance for diverse workloads

- Better energy efficiency

- Strong system-level control

- Support for both AI and general computing

These features make Xeon processors a reliable choice for cloud platforms like Google Cloud.

The Growing Role of IPUs in Cloud Infrastructure

Along with CPUs, the partnership also focuses on Infrastructure Processing Units (IPUs). These are custom-built components designed to handle specific tasks within data centers.

IPUs help by taking over tasks like:

- Networking

- Storage management

- Security operations

By offloading these responsibilities from CPUs, IPUs improve overall system efficiency and allow better use of computing resources.
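The offloading effect described above can be illustrated with a deliberately simple toy model. This is a hypothetical sketch, not a description of Google Cloud's or Intel's actual design: the `Host` type, the `cores_available_for_workloads` function and the 25% infrastructure share are all illustrative assumptions, and the model assumes the IPU absorbs all infrastructure work, which real deployments do not necessarily achieve.

```python
from dataclasses import dataclass


@dataclass
class Host:
    """Hypothetical server used only for this illustration."""
    total_cores: int
    # Assumed share of CPU time spent on networking, storage and security.
    infra_fraction: float

    def __post_init__(self) -> None:
        if not 0.0 <= self.infra_fraction <= 1.0:
            raise ValueError("infra_fraction must be between 0 and 1")


def cores_available_for_workloads(host: Host, offloaded: bool) -> float:
    """Return the cores left for customer workloads in this toy model."""
    if offloaded:
        # Simplifying assumption: the IPU takes over all infrastructure tasks,
        # so every CPU core is free for customer workloads.
        return float(host.total_cores)
    # Without an IPU, infrastructure tasks consume part of the CPU's capacity.
    return host.total_cores * (1.0 - host.infra_fraction)


host = Host(total_cores=64, infra_fraction=0.25)
print(cores_available_for_workloads(host, offloaded=False))  # 48.0
print(cores_available_for_workloads(host, offloaded=True))   # 64.0
```

Under these made-up numbers, offloading returns the equivalent of 16 cores to application work; the real gain depends on how much infrastructure work a given platform actually moves off the CPU.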

Comparison: Xeon CPUs vs IPUs

| Feature | Intel Xeon CPUs | IPUs (Infrastructure Processing Units) |
|---|---|---|
| Primary function | General-purpose computing | Task-specific acceleration |
| Workload type | Broad range of workloads | Infrastructure tasks (networking, storage, security) |
| Flexibility | High | Limited but efficient |
| System impact | Core processing | Resource optimization |
| Role in AI | Coordination and control | Performance enhancement |

This combination creates a balanced system that is both powerful and efficient.

Improving AI Performance at Scale

Google Cloud uses Intel Xeon processors in its latest instances, including C4 and N4. These systems support a wide range of applications, from AI training to real-time inference.

Adding IPUs improves performance further. Performance becomes more predictable, and resources are used more effectively. This helps businesses run AI applications without unnecessary delays or high costs.

Building the Future of AI Infrastructure

The collaboration between Intel and Google Cloud is focused on long-term growth. Instead of relying only on accelerators, they are creating balanced systems that combine general-purpose and specialized computing.

This approach helps in:

- Reducing system complexity

- Improving scalability

- Lowering operational costs

- Enhancing overall performance

As AI continues to expand, such innovations will become even more important.

Conclusion

Intel and Google Cloud are building a stronger partnership to support the future of AI. By combining powerful Xeon CPUs with specialized IPUs, they are creating systems that are efficient, scalable, and ready for growing demands.

This collaboration is not just about improving technology today. It is about preparing for the next wave of AI innovation, where performance and efficiency go hand in hand.